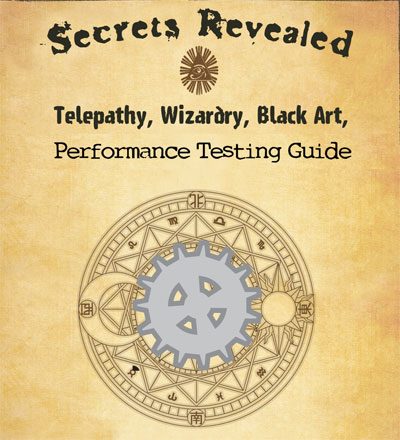

How to Start Performance Testing (The Black Art Revealed)

Last week I had the pleasure of speaking with Scott Moore about performance testing and LoadRunner in Episode 38 of TestTalks. The following are the top things I learned from the interview:

What is Performance Testing?

I think performance testing is one of those topics that most teams misunderstand. Before you start a new performance project, it’s important to get everyone on the same page about the terminology and about what matters most for your project.

Performance testing covers multiple types of tests. One of the most commonly misunderstood distinctions is load testing vs. stress testing.

To help clarify the difference between the two, Scott recommends that you decide which approach you are going to take by focusing on one of these three areas:

- The end user experience

- The hardware performance

- The software and how it scales

If you can narrow your focus to one of these areas and find out which one matters most to the client, you’ll go a long way towards knowing how to position your tests.

This helps you determine whether you need a load test or a stress test.

Load vs Stress Testing

One of the dangers of performance testing is using unrealistic test scenarios.

When creating a load test, focus on a real-world, “perfect storm” type of situation, but one that could actually happen out in the wild once your product is released.

A stress test is really about finding the first point of failure. Often you don’t have the luxury of adding multiple configurations, multiple servers and trying different load balancers unless you’re in some kind of lab situation.

90% of the time, teams want to build a load matrix that reflects a really bad day or a bad hour, and to determine what the end user is going to experience under it. Other questions are whether the hardware is going to fall over, or whether the software is going to keep working under that load. Once you get those definitions out of the way, you can go from there.
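To make the idea of a load matrix more concrete, here is a minimal Python sketch that turns a typical day’s transaction volumes into hourly targets for a bad hour. The transaction names, volumes, peak-hour share and bad-day multiplier are all hypothetical; in practice they should come from your production logs or analytics.

```python
from dataclasses import dataclass


@dataclass
class Transaction:
    name: str
    typical_daily_volume: int  # hypothetical count on a normal business day


def bad_hour_matrix(transactions, peak_hour_share, bad_day_factor):
    """Hourly target per transaction for a realistic 'bad hour'.

    peak_hour_share: fraction of a day's traffic that lands in the busiest hour.
    bad_day_factor: how much busier a bad day is than a typical day.
    """
    return {
        t.name: round(t.typical_daily_volume * peak_hour_share * bad_day_factor)
        for t in transactions
    }


if __name__ == "__main__":
    # Illustrative mix; replace with numbers from production logs or analytics.
    mix = [
        Transaction("login", 20_000),
        Transaction("search", 50_000),
        Transaction("checkout", 4_000),
    ]
    matrix = bad_hour_matrix(mix, peak_hour_share=0.15, bad_day_factor=2.0)
    for name, per_hour in matrix.items():
        print(f"{name}: {per_hour} per hour")
```

Keeping the mix per transaction, rather than a single total, preserves the ratio between cheap and expensive operations that real users produce.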

Another of your main jobs is to create a realistic performance test that allows business decisions to be made. To that end, you need some sort of service-level agreement (SLA) to measure against, covering questions like:

- How many users?

- How many transactions per hour?

- What response time should count as a good or bad user experience? Maybe it’s eight seconds externally across the WAN; maybe it’s three seconds internally.

You need to define what “good performance” means for your application so you can test against those SLAs. Without concrete pass/fail performance criteria, how will you know when the performance testing is done?
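As an illustration of a concrete pass/fail criterion, the sketch below checks one test run against a hypothetical SLA: a response-time budget at a chosen percentile plus a minimum throughput. The `Sla` fields, the nearest-rank percentile method and the sample numbers are assumptions made for this example, not values from the interview or part of any LoadRunner API.

```python
import math
from dataclasses import dataclass


@dataclass
class Sla:
    """Hypothetical SLA: a response-time budget at a percentile plus a throughput floor."""
    percentile: int          # e.g. 90 means "90% of responses"
    max_response_s: float    # e.g. 3.0 s internally, 8.0 s across the WAN
    min_tx_per_hour: float   # throughput the system must sustain


def nearest_rank_percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of response times."""
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def evaluate(sla, response_times_s, tx_completed, duration_s):
    """Return (passed, details) for one test run measured against the SLA."""
    observed = nearest_rank_percentile(response_times_s, sla.percentile)
    tx_per_hour = tx_completed * 3600 / duration_s
    passed = observed <= sla.max_response_s and tx_per_hour >= sla.min_tx_per_hour
    return passed, {"percentile_s": observed, "tx_per_hour": tx_per_hour}


if __name__ == "__main__":
    sla = Sla(percentile=90, max_response_s=3.0, min_tx_per_hour=10_000)
    times = [1.1, 1.2, 1.8, 2.0, 2.2, 2.4, 2.5, 2.7, 2.9, 3.4]
    passed, details = evaluate(sla, times, tx_completed=3_000, duration_s=900)
    print("PASS" if passed else "FAIL", details)
```

Using a percentile rather than an average keeps a few fast responses from hiding a slow tail that real users would notice.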

Performance Tools for your craft

Once your terms are defined, everyone is on the same page, expectations are set, and you know what your SLAs are, you will need a tool to help with your performance conjuring.

Scott makes no bones about it: he loves LoadRunner, and with 50 free virtual user licenses it’s even better than before. Thanks to those free licenses, most small and medium organizations can get their hands on a real enterprise-level tool. (If you need more than 50 users, LoadRunner also lets you lease or rent additional virtual users for a set amount of time, which helps lower your overall cost of ownership.)

LoadRunner also still has one of the best analysis and reporting engines out there, which lets you extract plenty of useful decision-making information from your performance tests. It also lets you produce uncluttered graphs that make sense to your managers.

It’s critical to break out your monitor graphs and pick key metrics to overlay with one another, so you can show the business where the application is having a problem, which area is affected, and the proof to back it up.
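LoadRunner’s analysis engine is built for this kind of work; purely to illustrate the idea, here is a small Python sketch that ranks monitor series by how closely they track response time, using the Pearson correlation coefficient. The metric names and sample values are hypothetical, and a strong correlation tells you where to look first rather than proving the cause on its own.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length metric series."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        return 0.0  # a flat series cannot explain changes in the other one
    return cov / (spread_x * spread_y)


def rank_suspects(response_times, monitors):
    """Order monitor series by how strongly they move with response time."""
    scores = {name: pearson(response_times, series) for name, series in monitors.items()}
    return sorted(scores.items(), key=lambda item: abs(item[1]), reverse=True)


if __name__ == "__main__":
    # Hypothetical samples taken at the same intervals during a ramp-up.
    response_times = [1.0, 1.2, 1.5, 2.4, 3.8]
    monitors = {
        "cpu_pct": [35, 42, 55, 80, 97],
        "memory_pct": [60, 61, 60, 62, 61],
        "disk_busy_pct": [20, 22, 21, 23, 22],
    }
    for name, r in rank_suspects(response_times, monitors):
        print(f"{name}: r = {r:.2f}")
```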

Learning your Performance Testing craft

Once you have your tool, you need to learn it. If you are new to performance testing and have mainly been a functional tester, the learning curve for getting up to speed can be steep because of all the varied technical knowledge you need.

For example, you need to understand web technology, or at least how to read HTML and what happens to a page as it passes through HTTP.

You also need to know what a proxy is, how LoadRunner captures all that traffic and makes sense of it, and what correlation is. These are the kinds of gaps Scott has seen over the years when training people on LoadRunner.
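Correlation, in the scripting sense, means capturing a dynamic value the server returns (such as a session token) and feeding it into later requests instead of replaying the recorded value. The sketch below shows the concept in plain Python with a regular expression. The HTML snippet, the `sessionToken` field name and both helper functions are hypothetical; LoadRunner has its own built-in correlation mechanisms, and this is not how it implements them.

```python
import re

# Hypothetical recorded response: the server issues a new session token on every
# login, so a script that replays the recorded value will fail on the next run.
LOGIN_RESPONSE = '<input type="hidden" name="sessionToken" value="a1b2c3d4">'


def extract_dynamic_value(html, field="sessionToken"):
    """Capture the value between a known left boundary and right boundary."""
    pattern = rf'name="{re.escape(field)}" value="([^"]*)"'
    match = re.search(pattern, html)
    if match is None:
        raise ValueError(f"{field!r} not found: the script needs to be re-correlated")
    return match.group(1)


def build_next_request(template, token):
    """Insert the captured value instead of the hard-coded recorded one."""
    return template.replace("{sessionToken}", token)


if __name__ == "__main__":
    token = extract_dynamic_value(LOGIN_RESPONSE)
    print(build_next_request("/cart/add?item=42&sessionToken={sessionToken}", token))
```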

Because of this learning curve, I’m always amazed when a team thinks they can start doing meaningful performance testing without having any training.

Companies really need to invest in their people and train them on the performance testing tool they choose. This is especially critical because the results of a performance test can make or break your decision on whether to ship your product.

So it’s really important to have someone who knows what they’re doing, and honestly, most testers and developers do not make good performance engineers. You really need someone with a unique set of skills and an actual performance testing background.

Ideally, you want someone with a performance engineering mindset from the beginning: someone who engineers performance into the product and understands the different areas that need to be examined.

If you do not have an experienced performance lead in place, be sure to get the training that you need.

Once you have your tool selected and have some training under your belt, you should be able to begin your performance efforts.

Agile Performance Testing

You should start performance testing as early as possible. It’s much harder to change an application’s architecture once the software has already been developed, so don’t wait until development is finished to start any kind of performance analysis.

One way to do this is to start as early as when the technical specs are being written. That way you can bake performance criteria into your design from the start. You can also use performance testing to help shake out the different types of technologies that you are deciding between.

In the past, I’ve done this by adding a technical spike to a sprint, acting as a small proof of concept to test different configurations and compare their performance numbers.
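A real spike would run a small load test against each candidate configuration, but the comparison step itself can be sketched in a few lines. This minimal Python example uses the standard `timeit` module to compare two hypothetical candidate implementations and report the best per-call time for each; the candidates are placeholders for whatever options your team is actually evaluating.

```python
import timeit


def compare_candidates(candidates, repeat=5, number=200):
    """Return the best observed seconds-per-call for each candidate callable."""
    results = {}
    for name, fn in candidates.items():
        runs = timeit.repeat(fn, repeat=repeat, number=number)
        # The fastest run is the least disturbed by other activity on the machine.
        results[name] = min(runs) / number
    return results


# Hypothetical candidates: two ways to build the same large payload.
def build_by_concatenation(n=1_000):
    payload = ""
    for i in range(n):
        payload += str(i)
    return payload


def build_by_join(n=1_000):
    return "".join(str(i) for i in range(n))


if __name__ == "__main__":
    timings = compare_candidates({
        "concatenation": build_by_concatenation,
        "join": build_by_join,
    })
    for name, seconds in sorted(timings.items(), key=lambda item: item[1]):
        print(f"{name}: {seconds * 1e6:.1f} microseconds per call")
```

Single-process timings like these only tell you about raw cost per call; how a choice behaves under concurrent load still needs a proper load test.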

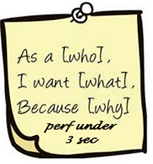

If you work in an Agile shop, make sure that anyone who writes a user story on a Post-It note or in a tool like Rally also includes a performance requirement with it.

The earlier you can start addressing performance, the better. Of course, scripting is the easy part. What metrics and monitors should you watch when running your performance tests?

Beware of these performance stumbling blocks

It’s easy to get carried away with performance monitoring. It’s tempting to turn everything on and think you’re being thorough. But over-monitoring can make your systems look like they’re performing poorly simply because you’re monitoring too much, too often. To start, it’s best to stick with the four basic monitoring areas:

- CPU

- Memory

- Disk

- Network

Choose a few key performance indicators in each of these areas and start with those. When you see a particular area of your application begin to crumble under load, do a deep dive into that area.
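One way to apply the start-with-the-basics rule is to track only a handful of KPIs per area and flag an area for a deep dive once any of them crosses a limit. In the Python sketch below, the KPI names and threshold values are illustrative assumptions, not recommended limits; derive your own from a baseline run on your hardware.

```python
# Illustrative starting KPIs, a few per basic area. These limits are assumptions
# for the example, not recommendations; derive yours from a baseline run.
THRESHOLDS = {
    "cpu": {"utilization_pct": 80, "run_queue_per_core": 2},
    "memory": {"used_pct": 90, "pages_swapped_per_s": 100},
    "disk": {"busy_pct": 70, "avg_queue_length": 2},
    "network": {"bandwidth_used_pct": 70, "retransmits_per_s": 10},
}


def areas_to_investigate(samples, thresholds=THRESHOLDS):
    """Return {area: [KPIs over their limit]} based on each KPI's peak sample."""
    flagged = {}
    for area, kpis in thresholds.items():
        area_samples = samples.get(area, {})
        breaches = [
            kpi for kpi, limit in kpis.items()
            if max(area_samples.get(kpi, []), default=0) > limit
        ]
        if breaches:
            flagged[area] = breaches
    return flagged


if __name__ == "__main__":
    run = {
        "cpu": {"utilization_pct": [45, 60, 92], "run_queue_per_core": [0.5, 1.0, 1.5]},
        "disk": {"busy_pct": [20, 35, 40]},
    }
    print(areas_to_investigate(run))  # only CPU crossed a limit in this sample
```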

Another pitfall to guard against is failing to mimic how real users interact with your system. I’ve seen applications burst into flames because someone hammered the system with just a few virtual users running as fast as possible. These tests end up producing more transactions in a few minutes than real users would create in a typical day.

This is not how you should be doing load testing. Once you go beyond 1.5x to 2x of what realistic users would process on a system, it really becomes more of a stress test than a load test.
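The arithmetic behind realistic pacing is Little’s Law, N = X × (R + Z): the number of concurrent users equals throughput multiplied by the time each user spends per iteration (response time R plus think and wait time Z). The Python sketch below uses it to work out how often each virtual user should start an iteration, and labels a run a stress test when its rate exceeds the realistic rate by more than a chosen ratio. The 1.5 default mirrors the lower end of the range mentioned above; the function names and example numbers are hypothetical.

```python
def pacing_interval_s(virtual_users, target_tx_per_hour, tx_per_iteration=1):
    """Seconds between iteration starts for each virtual user.

    From Little's Law, N = X * (R + Z): with N users and a target throughput X
    (iterations per second), each user must spend N / X seconds per iteration,
    split between response time R and think/wait time Z.
    """
    iterations_per_s = target_tx_per_hour / tx_per_iteration / 3600
    return virtual_users / iterations_per_s


def think_budget_s(pacing_s, avg_response_s):
    """Time left for think time and pacing waits once responses are accounted for."""
    if avg_response_s >= pacing_s:
        raise ValueError("responses alone exceed the pacing interval; "
                         "add virtual users or lower the target throughput")
    return pacing_s - avg_response_s


def load_or_stress(test_tx_per_hour, realistic_tx_per_hour, stress_ratio=1.5):
    """Label a run by how far its rate exceeds what real users would generate."""
    ratio = test_tx_per_hour / realistic_tx_per_hour
    return ("stress" if ratio > stress_ratio else "load"), ratio


if __name__ == "__main__":
    pacing = pacing_interval_s(virtual_users=100, target_tx_per_hour=3_600)
    print(f"Each user starts an iteration every {pacing:.0f} s")
    print(f"Think/wait budget per iteration: {think_budget_s(pacing, avg_response_s=10):.0f} s")
    # A few users hammering the system with no think time:
    print(load_or_stress(test_tx_per_hour=20_000, realistic_tx_per_hour=3_600))
```

With 100 users and a target of 3,600 transactions per hour, each user should start one iteration every 100 seconds, not as fast as the script can loop.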

Always make one virtual user behave like one real user.
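Here is a minimal sketch of a paced virtual user in Python, assuming a hypothetical `business_process` callable that performs one iteration of the user’s flow and calls `think()` wherever a real person would pause. Random think time plus a fixed pacing interval (for example, one derived from your target throughput) keeps each virtual user at roughly one real user’s rate. The clock and sleep functions are injectable so the loop can be tested without actually waiting.

```python
import random
import time


def run_virtual_user(business_process, iterations, pacing_s,
                     think_time_s=(3.0, 8.0), sleep=time.sleep, clock=time.monotonic):
    """Drive one virtual user at roughly one real user's pace.

    business_process: hypothetical callable performing one iteration of the
        user's flow; it receives a think() function to call wherever a real
        person would pause (reading a page, filling in a form).
    pacing_s: target seconds between the starts of consecutive iterations.
    think_time_s: (min, max) range for each random think-time pause.
    """
    def think():
        sleep(random.uniform(*think_time_s))

    for _ in range(iterations):
        started = clock()
        business_process(think)
        elapsed = clock() - started
        # Wait out the rest of the interval instead of starting again at once.
        if elapsed < pacing_s:
            sleep(pacing_s - elapsed)


if __name__ == "__main__":
    def browse_and_search(think):
        # A real script would send the home-page and search requests here.
        think()  # user reads the home page
        think()  # user types a search term

    run_virtual_user(browse_and_search, iterations=2, pacing_s=1.0,
                     think_time_s=(0.1, 0.3))
```

Pacing measures from the start of each iteration, so a slow response eats into the wait rather than pushing the user’s rate down further; if responses exceed the interval, the next iteration simply starts right away.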

Cast your Performance Testing Spell

Using Scott’s advice, you should be able to start creating performance awesomeness and have your company completely under your performance spell.

To hear more performance invocations, listen to TestTalks 39: Scott Moore: Getting Started with Performance Testing and LoadRunner

Joe Colantonio is the founder of TestGuild, an industry-leading platform for automation testing and software testing tools. With over 25 years of hands-on experience, he has worked with top enterprise companies, helped develop early test automation tools and frameworks, and runs the largest online automation testing conference, Automation Guild.

Joe is also the author of Automation Awesomeness: 260 Actionable Affirmations To Improve Your QA & Automation Testing Skills and the host of the TestGuild podcast, which he has released weekly since 2014, making it the longest-running podcast dedicated to automation testing. Over the years, he has interviewed top thought leaders in DevOps, AI-driven test automation, and software quality, shaping the conversation in the industry.

With a reach of over 400,000 across his YouTube channel, LinkedIn, email list, and other social channels, Joe’s insights impact thousands of testers and engineers worldwide.

He has worked with some of the top companies in software testing and automation, including Tricentis, Keysight, Applitools, and BrowserStack, as sponsors and partners, helping them connect with the right audience in the automation testing space.

Follow him on LinkedIn or check out more at TestGuild.com.
