Don’t be a pervert – 6 ways to keep your BDD clean

Maybe it’s just me, but I have a feeling that my team (and many other companies) are perverting the intent of Behavior Driven Development (BDD).

I’ve spoken with many BDD thought leaders like Matt Wynne, Seb Rose and Lance Kind over the past year on my TestTalks podcast, and found that what they recommend and what I often see are at odds.

Based on what I’ve gleaned from these interviews as well as my own experience working on a BDD project for over two years, here are 6 suggestions for keeping your BDDs clean:

- Keep your BDD implementation-free

- It’s all about conversations

- Automation is a side benefit — not the reason to do BDD

- Don’t make everything a UI test

- A scenario is not a requirement

- Just because you’re doing Scrum does not mean you’re doing Agile

Keep your BDD implementation-free

One way to make your BDD hard to maintain and read is to include implementation detail in your BDD scenarios.

You should keep your BDD implementation-free, and instead, focus on what your user wants to do — not how. This is important because you should always be thinking about the users of your application. “Perverts” tend to use lots of unnecessary implementation details like button clicks and dropdown information that users don’t really care about. I always feel disgusted when I see low-level info like this in a team’s .feature file, because it shows that they’re not having the critical discussions they need to in order to ensure that they are developing the functionality the application’s users are actually going to want.

Lance Kind had a unique steampunk-like take on how to keep his BDD .feature files implementation-free. He asks himself, “Can I implement this behavior using steam?”

For example, if I have a Given/When/Then that has button and mouse clicks, I obviously need a mouse, which means I can’t use steam technology.

However, if the behavior that you are describing is implementation detail-free, then you could make it work using steam, magic or whatever technology you wish.

Thinking of this example while you’re creating your scenarios should help you not to include lower-level implementation details.
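To make the difference concrete, here is a minimal sketch in Python. The two scenarios, the word list and the `find_ui_details` helper are all my own hypothetical illustrations, not something Lance or any BDD tool provides. The crude check simply flags UI-flavoured words that hint a scenario is describing how instead of what.

```python
import re

# Two hypothetical versions of the same scenario, written in Gherkin.
IMPERATIVE = """\
Scenario: Customer logs in
  Given I am on the login page
  When I type "pat@example.com" into the email field
  And I click the "Sign in" button
  Then I should see the account dropdown
"""

DECLARATIVE = """\
Scenario: Customer logs in
  Given Pat has an account
  When Pat logs in with valid credentials
  Then Pat can see their account details
"""

# Assumed word list for illustration only; tune it for your own domain.
UI_WORDS = ("click", "button", "dropdown", "field", "page", "type")


def find_ui_details(scenario: str) -> list[str]:
    """Return the UI-flavoured words found in a scenario, in order of appearance."""
    words = re.findall(r"[a-z]+", scenario.lower())
    return [word for word in words if word in UI_WORDS]


if __name__ == "__main__":
    print(find_ui_details(IMPERATIVE))   # several hits: this one needs a mouse
    print(find_ui_details(DECLARATIVE))  # no hits: this one could run on steam
```

A word list like this is no substitute for the conversation, of course. It is only a cheap smell detector that might prompt one.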

I’ve found that the main cause for this type of behavior is that a team is probably trying to use BDD as an automation framework — not as a collaboration tool. But BDD does not equal test automation.

Automation is a side benefit; not the reason to do BDD

Seb Rose believes that the most important thing about BDD tools like SpecFlow and Cucumber is that, at their heart, they are tools that support collaboration.

If you are not able to collaborate with somebody who’s responsible for the customer or business side, then please don’t try using a tool like Cucumber for a test automation product. Seb goes on to say, “If you want to do test automation, go and get a dedicated test automation tool. I don’t think you’ll have any success, but don’t use Cucumber, because I know that we will not be supporting you in a way that you want it to work. It is designed as a collaboration tool, or to support collaboration.”

Treating your BDD as automation is an anti-pattern. So anytime you hear things like “We need to speed up testing,” or “BDD will improve our test automation efforts,” watch out.

Okay. If BDD is not about automation, what is it about?

It’s about BDD Conversations

When I interviewed Matt Wynne, author of The Cucumber Book, and asked him what the purpose of BDD is, he said the magic of BDD is that you can sit down with your customer and work together with them to describe the behavior you want the application to have.

You must have this conversation about exactly what you want. Conversations like these tend to uncover all kinds of assumptions, misunderstandings and gaps between your understanding of the desired result and your customer’s. The beauty of it is that you get the chance to hash these out and find bugs before you even sit down to write the code.

Focusing on conversations rather than being handed a sheet full of predefined requirements is probably a new concept to some teams, especially those that have started BDD and have also recently made a change from waterfall methodologies to Agile.

If you are on such a team, make sure to guard against things such as treating scenarios like requirements.

A scenario is not a requirement

One of the most difficult struggles I’ve had is to keep reminding my teams that a scenario is not a requirement, because treating it as one can cause all kinds of issues. That’s why I usually ask my BDD guests about it. Many of them say that they don’t feel a scenario is a requirement as such. The question usually comes up when we try to map scenarios back to more conventional, old-school ways of thinking about requirements, and things invariably get a bit muddled.

Seb also mentioned that BDD quite blatantly came out of the Agile way of working.

A BDD feature is some type of capability that the product is going to offer. Within that feature, we then try and use stories in the Agile way of working to deliver small slices of functionality, and iteratively and incrementally build that feature up into something that is of value to the customer.

For each of those small stories, we have a discussion with the product owner, and that’s a meeting that, if you follow the BDD school of terminology, would be called the “Three Amigos” meeting.

In that meeting, we try and explore the story with concrete examples, possibly making the story smaller by splitting it into individual parts.

What we’re driving at here is to illustrate acceptance criteria, which are the business rules that scope that part of the feature.

They definitely mesh with requirements, but if you were to look at a requirement document that says the user should be able to do this and do that, there’s not necessarily a one-to-one mapping between those requirements expressed in a traditional way, and acceptance criteria expressed as rules that scope a user story.

When I asked Lance Kind this question on Twitter he replied,

A collection of scenarios define a requirement. Ask your group why they think scenarios to a requirement are one to one? Ask how many scenarios to handle a “login to Gmail” requirement.

In fact, Seb pointed out that one of the main anti-patterns he sees is businesses insisting that a scenario be a requirement.

When people are getting started with BDD, another common anti-pattern I see is making every scenario a UI test.

Don’t make everything a UI test

Often when people think about test automation they think of end-to-end, UI-based examples. This type of thinking tends to create slow, brittle tests.

In his book Succeeding with Agile: Software Development Using Scrum, Mike Cohn introduces the concept of a test automation pyramid, which is broken up into three sections. The largest, bottom section is made up of unit tests, the middle section is made up of service tests, and the smallest, top section consists of UI tests.

In my experience, tests below the UI level tend to run quicker and more reliably.

Also — just because BDD scenarios are written from a user’s point of view doesn’t mean the functionality needs to be tested directly from the UI.

It’s one thing to want test specifications written in a business-readable fashion that most people can interpret, and quite another to think you need to connect a test to the entire stack of the application in order to trust the result when it passes.

Granted, you definitely need a certain number of tests at the top of the pyramid that test the whole stack. But the more you focus on the lower levels of the automation pyramid, the closer you get to the actual key if statements that a test can connect to. Whether the test is business readable or not, the feedback is going to be faster, more reliable and simply better.
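Here is a minimal sketch of what that can look like. The `Basket` class, the free-shipping rule and the numbers are hypothetical, made up purely for illustration. The scenario stays business readable in the comments, but the test talks straight to the domain logic, with no browser or HTTP layer in between.

```python
from dataclasses import dataclass, field


@dataclass
class Basket:
    """Hypothetical domain object; no UI or HTTP layer is involved."""
    prices: list[int] = field(default_factory=list)

    def add(self, price: int) -> None:
        self.prices.append(price)

    def total(self, free_shipping_from: int = 50, shipping: int = 5) -> int:
        subtotal = sum(self.prices)
        # This is the key "if statement" the scenario is really about.
        if subtotal >= free_shipping_from:
            return subtotal
        return subtotal + shipping


# Scenario: Orders of 50 or more ship for free
def test_large_order_ships_free():
    basket = Basket()              # Given an empty basket
    basket.add(30)                 # When the customer adds items worth 60
    basket.add(30)
    assert basket.total() == 60    # Then no shipping is charged


# Scenario: Smaller orders pay for shipping
def test_small_order_pays_shipping():
    basket = Basket()              # Given an empty basket
    basket.add(20)                 # When the customer adds an item worth 20
    assert basket.total() == 25    # Then shipping of 5 is added


if __name__ == "__main__":
    test_large_order_ships_free()
    test_small_order_pays_shipping()
    print("both scenarios pass")
```

In a real project the Given/When/Then lines would live in a .feature file and the step definitions would make these same calls. The point is only that the steps do not have to drive a browser.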

The problem with multi-layered end-to-end tests, which exercise many layers of the app and its integrations, is that it’s really hard to know why they fail.

Don’t worry: you can still get the UI coverage you need without running a bunch of slow UI tests. It can be done using a pattern that Seb Rose shared with me.

One way of going about it is by using a pattern known as the Presenter First pattern. It’s a modification of the MVC model, but essentially, if you draw out the MVC pattern as blocks and arrows, you can see that the view, which is your UI, has well-defined channels of communication with the model and the controller.

If you can replace those with models and controllers that your tests create and control, then there’s no reason you can’t just test that the UI behaves in the way that you want.

You can set your model and your controller to mimic all sorts of bizarre behaviors that may or may not happen in error conditions, when networks go down, or when someone pulls the plug out.

The pyramid does move from unit tests at the bottom, through integration tests, finishing with end-to-end tests at the top, but that actually has nothing to do with BDD. End-to-end tests don’t necessarily involve the UI, and you should be able to unit test your GUI, because it’s just another component.

If you think back to MVC, the view is just a component. If you can stub out the model and the controller, there’s no reason you can’t test the UI as a component or as a unit within your system, thus enabling you to get repeatable, fast tests of your UI.

I think this is worth mentioning because quite often, people think the only way to test your UI is by spinning up the whole system and driving it as an acceptance test. The simple fact is that if you architect it in a way that allows you to substitute in mock controllers and models, there’s no reason at all why you shouldn’t be able to unit test or component test your UI.
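As a rough sketch of the idea, assume a view that is handed its model and controller when it is created. Everything here (`LoginView`, `StubModel`, `SpyController` and the messages) is hypothetical and not taken from any particular framework. The test doubles let us simulate a network failure and check the view’s behavior without starting the rest of the system.

```python
class LoginView:
    """Hypothetical view: renders what the model reports and forwards user input."""

    def __init__(self, model, controller):
        self.model = model
        self.controller = controller

    def render(self) -> str:
        try:
            return f"Welcome, {self.model.current_user()}"
        except ConnectionError:
            return "We can't reach the server. Please try again."

    def submit(self, email: str) -> None:
        self.controller.login(email)


class StubModel:
    """Test double for the model; can be told to behave as if the network is down."""

    def __init__(self, user: str = "", fail: bool = False):
        self.user = user
        self.fail = fail

    def current_user(self) -> str:
        if self.fail:
            raise ConnectionError("network down")
        return self.user


class SpyController:
    """Test double for the controller; records what the view asked it to do."""

    def __init__(self):
        self.logins = []

    def login(self, email: str) -> None:
        self.logins.append(email)


if __name__ == "__main__":
    happy = LoginView(StubModel(user="Pat"), SpyController())
    assert happy.render() == "Welcome, Pat"

    broken = LoginView(StubModel(fail=True), SpyController())
    assert "try again" in broken.render()

    spy = SpyController()
    LoginView(StubModel(user="Pat"), spy).submit("pat@example.com")
    assert spy.logins == ["pat@example.com"]
```

Because the view only ever sees the objects it was given, each of these checks runs in memory and is repeatable, which is the kind of fast UI feedback described above.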

Just because you’re doing Scrum does not mean you’re doing Agile

I think Matt Wynne sums up the cause of many of the BDD anti-patterns I’ve discussed up to this point by reminding me that just because a company or team says that they are following Agile practices doesn’t mean that they are.

Matt believes the main problem is that most teams that think they’re doing Agile are really just doing Scrum, and are not practicing test-driven development.

Sure, developers may write some unit tests as they go, but they probably don’t write them before they write the code, and they certainly don’t write any acceptance tests. If they do write tests that sort of test the whole system integrated they’re usually written afterwards — at the end of a sprint or even in the next sprint — and they’re usually written by a separate group within the team.

In other words, those tests are written by a quality assurance (QA) person rather than as a developer activity. From my perspective, it’s that approach to quality assurance that is broken.

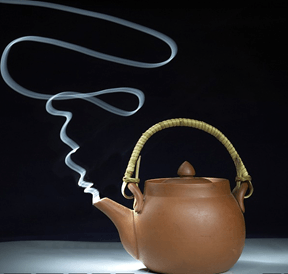

There’s this really old quote from a guy called Deming, who was a management consultant in the 60s, contrasting the way Japanese manufacturing approached quality with the way US manufacturing did at the time. He was laughing at the American approach to quality when he said, “Let’s make toast the American way. I’ll burn it, you scrape it.”

Focus on creating clean BDD

Let’s stop taking perverted pleasure out of making burnt BDD toast.

Instead, focus on creating clean BDD tests by keeping your tests implementation-free, having conversations, not treating your BDD like an automation framework, not making everything a UI test and making sure you are actually following Agile. You’ll feel better about your tests and your users will love you for it.

Joe Colantonio is the founder of TestGuild, an industry-leading platform for automation testing and software testing tools. With over 25 years of hands-on experience, he has worked with top enterprise companies, helped develop early test automation tools and frameworks, and runs the largest online automation testing conference, Automation Guild.

Joe is also the author of Automation Awesomeness: 260 Actionable Affirmations To Improve Your QA & Automation Testing Skills and the host of the TestGuild podcast, which he has released weekly since 2014, making it the longest-running podcast dedicated to automation testing. Over the years, he has interviewed top thought leaders in DevOps, AI-driven test automation, and software quality, shaping the conversation in the industry.

