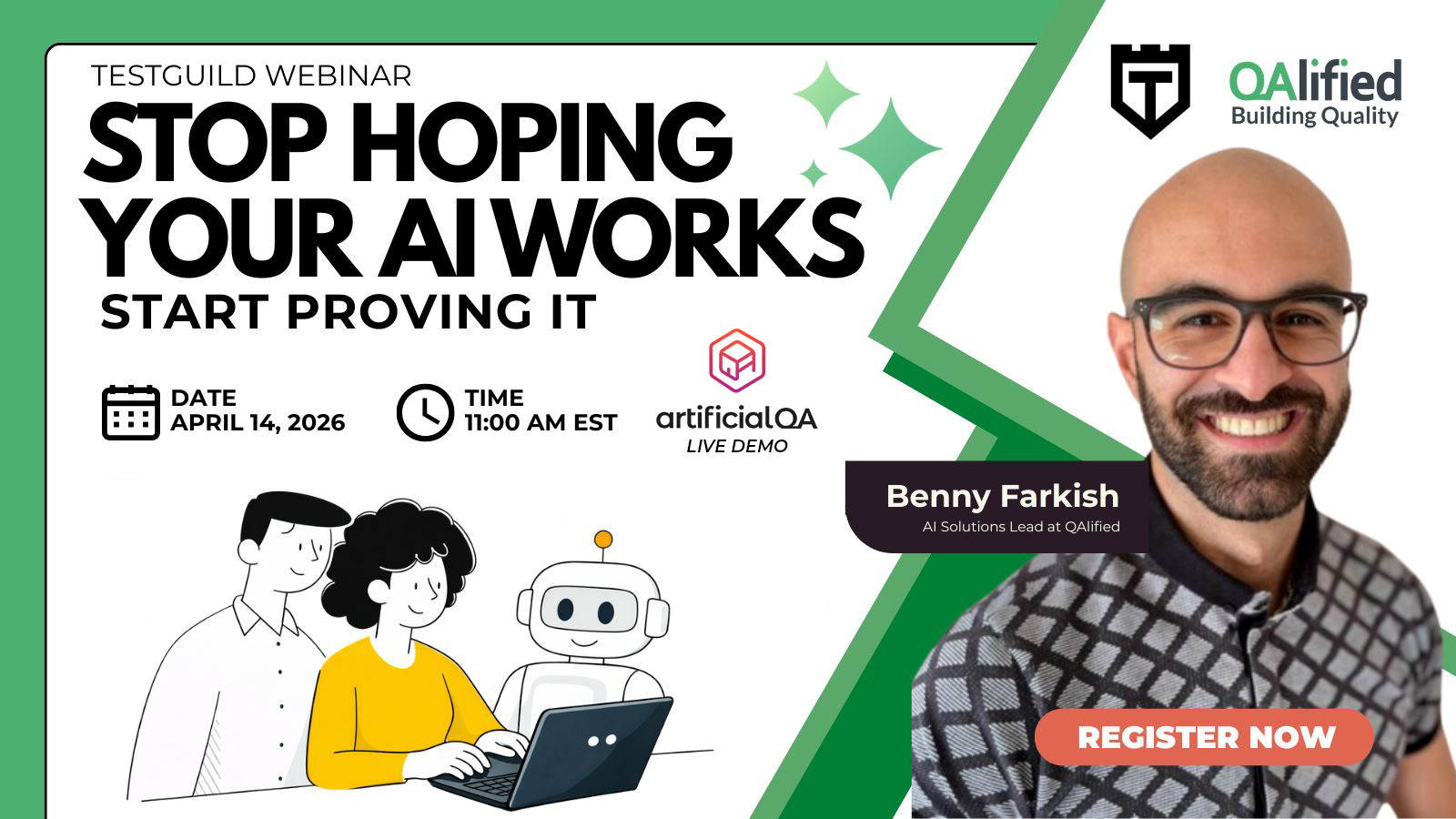

Stop Hoping Your AI Works. Start Proving It.

AI adoption is accelerating across industries, but how do you know your AI is actually giving the right answers? Because AI outputs are non-deterministic, traditional testing methods fall short, leaving organizations exposed to inaccurate responses, hallucinations, and compliance risks that are hard to detect.

In this live session, QAlified will walk you through a practical, repeatable framework for validating AI-powered systems, from chatbots and copilots to any other AI application. You'll see a live demo of ArtificialQA, a tool that systematically tests AI outputs using AI-based evaluators with configurable scoring.

What you’ll learn:

- How to define and measure AI accuracy using weighted, multi-evaluator scoring — beyond simple pass/fail.

- A repeatable methodology to test non-deterministic AI outputs (where the same question can produce different answers every time).

- How to build test suites that cover accuracy, safety, tone, and compliance — and run them automatically against any AI system.

- How to detect hallucinations, security leaks, and off-brand responses before your users do.

- ArtificialQA live demo: executing a full AI test plan and analyzing the results in real time.

Benny Farkish is AI Solutions Lead at QAlified, bringing over 13 years of experience in software testing and quality assurance. An engineering graduate from the University of Maryland, he has an extensive background in designing test strategies and leading both manual and automated testing initiatives. Benny currently focuses on the practical challenges of testing AI-integrated systems, working to ensure accuracy and reliability in products where traditional QA methods are no longer enough.

Discover practical techniques to ensure your AI performs reliably in real-world scenarios.


© 2026 Test Guild. All rights reserved.